If you believe the polls, Obama is in good shape for reelection. And my model’s not the only one showing this: you’ll find similar assessments from a range of other poll-watchers, too. The lead is clear enough that The New Republic’s Nate Cohn recently wrote, “If the polls stay where they are, which is the likeliest scenario, Obama would be a heavy favorite on Election Day, with Romney’s odds reduced to the risk of systemic polling failure.”

What would “systemic” polling failure look like? In this case, it would mean not only that some of the polls are overstating Obama’s level of support, but that most – or even all – of the polls have been consistently biased in Obama’s favor. If this is happening, we’ll have no way to know until Election Day. (Of course, it’s just as likely that the polls are systematically underestimating Obama’s vote share, but then Democrats have even less to be worried about.)

A failure of this magnitude would be major news. It would also be a break with recent history. In 2000, 2004, and 2008, presidential polls conducted just before Election Day were highly accurate, according to studies by Michael Traugott here and here; Pickup and Johnston; and Costas Panagopoulos. My own model in 2008 produced state-level forecasts based on the polls that were accurate to within 1.4% on Election Day, and 0.4% in the most competitive states.

Could this year be different? Methodologically, survey response rates have fallen below 10%, but it’s not evident how this necessarily helps Obama. Surveys conducted using automatic dialers (rather than live interviewers) often have even lower response rates, and are prohibited from calling cell phones – but, again, this tends to produce a pro-Republican – not pro-Democratic – lean. And although there are certainly house effects in the results of different polling firms, it seems unlikely that Democratic-leaning pollsters would intentionally distort their results to such an extent that they discredit themselves as reputable survey organizations.

My analysis has shown that despite these potential concerns, the state polls appear to be behaving almost exactly as we should expect. Using my model as a baseline, 54% of presidential poll outcomes are within the theoretical 50% margin of error; 93% are within the 90% margin of error, and 96% are within the 95% margin of error. This is consistent with a pattern of random sampling plus minor house effects.
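The coverage check described above can be illustrated with a minimal sketch. It is not the model's actual code: the simulated polls, the sample size of 800, and the assumed true share of 0.52 are all hypothetical, and the only source of error is simple random sampling. It counts how often a poll lands inside its nominal 50%, 90%, and 95% margins of error.

```python
import random
from statistics import NormalDist

def coverage(errors, ses, level):
    """Share of poll errors that fall inside the nominal `level` margin of error."""
    z = NormalDist().inv_cdf(0.5 + level / 2)  # two-sided critical value
    inside = sum(abs(e) <= z * se for e, se in zip(errors, ses))
    return inside / len(errors)

# Hypothetical data: 2,000 simulated polls of 800 respondents each,
# drawn by simple random sampling around an assumed true share of 0.52.
rng = random.Random(0)
true_share, n = 0.52, 800
se = (true_share * (1 - true_share) / n) ** 0.5  # standard error of one poll
errors = []
for _ in range(2000):
    hits = sum(rng.random() < true_share for _ in range(n))
    errors.append(hits / n - true_share)
ses = [se] * len(errors)

for level in (0.50, 0.90, 0.95):
    print(f"{level:.0%} margin: {coverage(errors, ses, level):.1%} of polls inside")
```

With pure sampling error, the observed shares should sit close to the nominal levels. Real polls that land slightly off those levels, as in the figures quoted above, are what one would expect if modest house effects are layered on top.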

Nevertheless, criticisms of the polls – and those of us who are tracking them – persist. One of the more creative claims about why the polling aggregators might be wrong this year comes from Sean Trende of RealClearPolitics and Jay Cost of The Weekly Standard. Their argument is that the distribution of survey errors has been bimodal – different from the normal distribution of errors produced by simple random sampling. If true, this would suggest that pollsters are envisioning two distinct models of the electorate: one more Republican, the other more Democratic. Presuming one of these models is correct, averaging all the polls together – as I do, and as do the Huffington Post and FiveThirtyEight – would simply contaminate the “good” polls with error from the “bad” ones. Both Trende and Cost contend the “bad” polls are those that favor Obama.

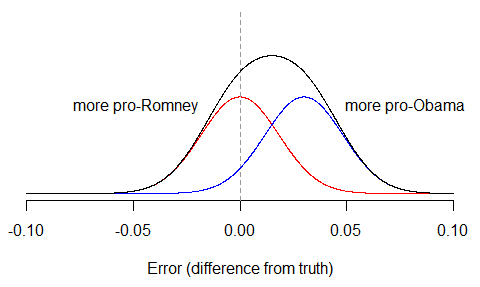

The problem with this hypothesis is that even if it were true (and the error rates suggest it’s not), there would be no way to observe evidence of bimodality in the polls unless the bias were far larger than anybody is currently claiming. The reason is that most of the error in the polls will still be due to random sampling variation, which no pollster can avoid. To see this, suppose that half the polls were biased 3% in Obama’s favor – a lot! – while half were unbiased. Then we’d have two separate distributions of polls: the unbiased group (red), and the biased group (blue), which we then combine to get the overall distribution (black). The final distribution is pulled to the right, but it still only has one peak.
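The thought experiment can be checked numerically with a rough sketch. The assumptions are labeled in the code: each poll is taken to have about 800 respondents near 50% support, which gives a sampling standard deviation of roughly 1.8 points, and `count_peaks` is a hypothetical helper that counts local maxima of the combined density on a grid.

```python
from statistics import NormalDist

def count_peaks(bias, sd, steps=2001):
    """Count local maxima of an equal-weight mixture of N(0, sd) and N(bias, sd)."""
    unbiased, biased = NormalDist(0.0, sd), NormalDist(bias, sd)
    lo, hi = -5 * sd, bias + 5 * sd
    xs = [lo + (hi - lo) * i / (steps - 1) for i in range(steps)]
    density = [0.5 * unbiased.pdf(x) + 0.5 * biased.pdf(x) for x in xs]
    return sum(
        density[i - 1] < density[i] > density[i + 1]
        for i in range(1, steps - 1)
    )

# Assumed sampling error: a poll of about 800 respondents near 50% support.
sd = (0.25 / 800) ** 0.5  # roughly 0.018, i.e. 1.8 points

print(count_peaks(bias=0.03, sd=sd))  # the 3-point bias from the example
print(count_peaks(bias=0.06, sd=sd))  # a much larger, 6-point bias
```

Under these assumptions the 3-point case yields a single peak and the 6-point case yields two. This matches the standard result that an equal mixture of two normal distributions with a common standard deviation is bimodal only when the means are more than two standard deviations apart, which here is about 3.5 points.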

Of course, it’s possible that in any particular state, with small numbers of polls, a histogram of observed survey results might just happen to look bimodal. But this would have to be due to chance alone. To conclude from it that pollsters are systematically cooking the books only proves that apophenia – the experience of seeing meaningful patterns or connections in random or meaningless data – is alive and well this campaign season.

The election is in a week. We’ll all have a chance to assess the accuracy of the polls then.

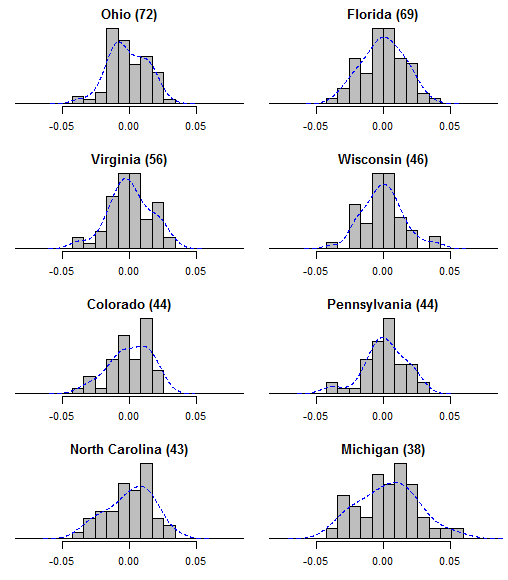

Update: I got a request to show the actual error distributions in the most frequently-polled states. All errors are calculated as a survey’s reported Obama share of the major-party vote, minus my model’s estimate of the “true” value on the day and state of that survey. Positive errors indicate polls that were more pro-Obama; negative errors indicate polls that were more pro-Romney. To help guide the eye, I’ve overlaid kernel density plots (histogram smoothers) in blue. The number of polls per state is in parentheses.
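For readers who want to reproduce this kind of plot, here is a minimal sketch of the error calculation and a Gaussian kernel density smoother. The poll numbers, the model estimates, and the bandwidth are all made up for illustration, and `poll_error` and `kde` are hypothetical helper names, not functions from the model itself.

```python
from math import exp, pi, sqrt

def poll_error(obama_pct, romney_pct, model_share):
    """Obama's share of the two-party vote in a poll, minus the model's estimate."""
    return obama_pct / (obama_pct + romney_pct) - model_share

def kde(errors, bandwidth):
    """Return a Gaussian kernel density estimate as a function of x."""
    norm = len(errors) * bandwidth * sqrt(2 * pi)
    def density(x):
        return sum(exp(-0.5 * ((x - e) / bandwidth) ** 2) for e in errors) / norm
    return density

# Hypothetical polls: (Obama %, Romney %, model estimate of Obama two-party share).
polls = [(49, 47, 0.512), (50, 46, 0.512), (47, 48, 0.511), (48, 48, 0.513)]
errors = [poll_error(o, r, m) for o, r, m in polls]
smooth = kde(errors, bandwidth=0.01)  # bandwidth is an arbitrary illustrative choice

for x in (-0.02, 0.0, 0.02):
    print(f"density at {x:+.2f}: {smooth(x):.1f}")
```

The smoothed curve depends heavily on the bandwidth when a state has only a handful of polls, which is one more reason not to read much into bumps in any single panel.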

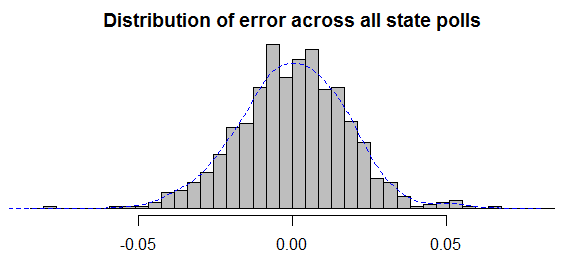

It may also help to see the overall distribution of errors across the entire set of state polls. After all, if there is “bimodality” then why should it only show up in particular states? The distribution looks fine to me.

As a political scientist, it is pretty clear to me that the mainstream media is heavily weighting its polling to favor Obama. This is seen in efforts to describe Obama as virtually impossible to beat, polls which project highly unrealistic turnout numbers for Obama given the obvious discouragement we see on the street. As a young, conservative faculty member at Williams College, I was startled by the extent to which the academic world was actively hostile to me simply because I disagreed with affirmative action and thought Bush would do a better job than Dukakis. From my perspective, it is not hard to believe that many Obama leaning polls have been biased to meet the needs of a leftist media establishment. I have seen worse things than that over my life. The good news for the majority of us, is that Rasmussen now shows Romney beating Obama in OH.

The GOP doesn’t trust government…doesn’t trust science…doesn’t trust any poll except Rasmussen’s…. Apparently, the only thing Republicans seem to absolutely trust is FOX News & Rush Limbaugh…

Neither you nor Nate Silver has a dog in this fight… Your interpretations of the data and projections will either be correct on Election Day or they will not..

Me thinks the GOP doth protest WAY too much —

Funny that you spend all of your post talking about liberal polling bias and then cite Rasmussen as your example of an unbiased poll.

I do not see Rasmussen as biased. Rasmussen consistently screens for likely voters and this naturally produces results that are more favorable for Republicans than polls that simply report the opinions of registered voters. I’m following the polling data pretty closely this cycle. It seems obvious to me that a ton of the polls showing Obama doing well are based on unrealistic turnout assumptions at the state level. These turnout assumptions are what produce the “bimodal” results we are seeing among the full range of polls. My larger issue, of course, is that often what passes for science really is not science when you look into the matter more closely. Sadly, many folks in the media and the academic world cannot be trusted to be objective when an election is at stake.

Dr. Drew (makes me think of VH1 for some reason), why does Fox News no longer work with Rasmussen Polling? Word is Roger Ailes himself found them to be too inaccurate this cycle. They still use Scott Rasmussen as a talking head, but no longer use his data nor is it presented as Fox News/Rasmussen Polling. Their polling seems to lean more to the mean as a result. We’ll all find out 11/6 who’s right & who’s wrong, but I found this to be rather interesting. Any response why the fair and balanced news organ refuses to use the data any longer?

The tendency of humans to see “patterns” where there aren’t any (or where the “patterns” are themselves random) is so deeply rooted that even scientifically trained people fall for it!

The corollary to this human frailty is the uncanny “ability” of randomness to “create” extremely convincing “forms” in time and space. When time is part of the equation (as is the case in prediction), weeding out persistent “patterns” in time requires a vastly larger amount of data than most people realize, and much larger than is available when national elections are involved (there goes the Univ of Co study…)

@JCD. What would the motivation for this bias be? So we Obama supporters can be brokenhearted the day after the election? It makes no sense for polls to WANT to bias their results, because then there will be a nasty surprise come election day. If anything, anybody wanting Obama to win would bias results slightly AGAINST him so as to motivate supporters to come out to vote for him to push him over the top. Perhaps this is what the GOP is doing, Rove is no dummy and his tentacles run throughout the media and polling infrastructure. Grassroots organizers can’t afford to subscribe to private pollsters, and accurate polling is expensive, so again, why would polls feed Obama supporters numbers that overestimate his standing in the polls? Unless they are wanting to give Romney an edge. You need to take a logic class again, Doctor?

All the Obama supporters I know, including myself, rue that Obama seems to receive so little attention in the mainstream media, and we often complain about its conservative slant. The Washington Post persistently makes editorial statements on the front page which support conservative ideals. We also believe the polls have been biased to the right in recent weeks, because there’s nothing more interesting than a horse race.

“the obvious discouragement we see on the street.” You live on the wrong street. That’s the point of random samples, you don’t get ad hoc examples giving you a distorted picture of reality. Statistical inference is a delicate thing.

Astonished that there are still people that believe Obama is ahead in the polls. Nearly all polls show Romney crushing Obama with independents, and a large portion heavily oversample democrats, assuming, in some cases, that turnout for dems will rival (and perhaps even surpass) 2008. Ludicrous.

Flawed analysis such as you see here can only serve to encourage complacent dems to stay home on election day, which is alright with me, frankly. Keep up the child work, anonymous blogger guy…

This is sort of like the lib version of Unskewedpolls.com, heh…

John C. Drew, Ph.D., how many times a day do you refer to yourself as a political scientist? I ask because I noticed that you’ve used the phrase “as a political scientist” in at least two of your comments, and your website is (of course) “Anonymous Political Scientist”. I’m not sure that the title commands as much respect as you seem to think it does.

John C. Drew – For one who is “following the polling data pretty closely this cycle”, I’m surprised that you aren’t aware that the Politico/GWU (Obama +1), Pew (tie), PPP (Obama +1), and WashPost/ABC (Romney +1) amongst others also look at likely voters. Essentially all polls this late do so. I fear you trust Rasmussen simply because you like its results. You should be more concerned that Rasmussen doesn’t contact cell-phone holders with no land line, now 35% of the voting population, and much more likely to vote Democratic than Republican. Aggregation sites like Votamatic, FiveThirtyEight, and Princeton Election Consortium use all of these polls, those potentially biased for/against each side and those more likely neutral, to come up with average and more reliable results. I suspect you will be surprised on Nov 6. Then again, you’re likely to blame the liberal media for falsifying those results as well.

..sorry, “keep up the good work”. Autocorrect..

Drew,

I have a question regarding your model, not related to the bimodal theory of this post (and I apologize in advance if I haven’t studied your methodology well enough or if the question was already answered long ago). We have noticed for a long time that your model predicts 332 EV for Obama. This actually corresponds to the exact score he would get if he happened to win all states where he is currently favored in your state-by-state analysis (i.e. all swing states except NC). What would happen if Obama lost some support in Florida, bringing your FL forecast closer to the 50%-50% threshold? Would the projection abruptly lose 29 EV, bringing it to 303? Or is there an intermediate regime (say, between 49.7% and 50.3% Obama support) where the projection would take an intermediate value, until his support becomes too low and the estimator would really fall to 303? Or maybe it’s just a coincidence that the current 332 value corresponds to an integer number of states won?

Thanks!

@Allan: “The tendency of humans to see “patterns” where there aren’t any (or where the “patterns” are themselves random) is so deeply rooted than even scientifically trained people fall for it!”

That’s why scientists use statistics. The only way to truly test these models is to see whether they do better than random against repeated trials. For example, PEC has done well, much better than random, but there have been only three trials, so the sample is small. So we’ll see.

Please comment on the following: Virtually all of your timelines for state polling show in early September a (post-convention) rise in polling for Obama followed by a (debate #1) fall in early October. In almost every case, it appears that the post-debate polling levels are very much like the late August figures (reversion to the mean?). In most states the figures have shown comparative stability since then. So, if one took a weighted (by population) sum of all these state polls to obtain a national figure, it should show the same trends. Yet the national polls have not shown a full reversion to the mean and, indeed, some of them now show a Romney lead. Other quantitative modelers, like Nate Silver, have commented on the fact that there is an apparent disparity between the weighted sum of the state polls and the national polls, but I haven’t seen any extended comments/guesses on what the most plausible explanations might be. I’d be interested in hearing more on this question.

“John C. Drew, Ph.D. October 29, 2012 at 12:37 pm

I do not see Rasmussen as biased. Rasmussen consistently screens for likely voters and this naturally produces results that are more favorable for Republicans than polls that simply report the opinions of registered voters. ”

(1) Virtually all polls screen for likely voters at this point. (2) You may not see Rasmussen as biased, but in 2008 their final poll had Ohio tied–even though Obama ended up winning the state by almost five points. http://www.realclearpolitics.com/epolls/2008/president/oh/ohio_mccain_vs_obama-400.html

David, Ph.D.

The motivation for bias seems obvious to me. Most of the folks in both the media and the academic world are active Democrat partisans. They have been trying to make the case that it is physically impossible for Romney to win and to keep Obama’s supporters from being discouraged by the negative economy. There is little to no downside for liberal adherents who are wrong about predicting an Obama win. I’m following the Twitter comments surrounding Nate Silver. A lot of liberals appear to be depending on Nate’s overly optimistic assessments to prevent themselves from “freaking out.”

Rasmussen leans well Right. Well known for this. Still needs to be considered, but more often it’s an outlier right of the average.

Dr. Drew, if there were in fact a media bias involved in polling, it would be observable in a comparison of polling to election results. And the various forecasters who have developed systems based upon these polls have a strong track record of predicting outcomes.

Bias certainly exists in the world, and if you are a conservative professor on a college campus, you’ve likely experienced it. But such anecdotes have no relevance to a determination of the accuracy of pollsters. Nor does a comparison to the one pollster you trust, unless you have some basis to support its methodology other than the fact that you like its results better than others.

John C. Dreww, “PhD”: I’ve added you to my list of folks to receive “better luck next time” emails approx. 11 pm EST Nov. 6, when CA comes in.

@Drew,

@Anyone

At the top of the page are expected EV. Are win probabilities reported elsewhere in the site?

At this stage, I find 538 predictions increasingly disconnected from reality, which doesn’t benefit anyone. It would be nice to have a more reasonable alternative.

Drew,

Interesting analysis. What would explain the very divergent results of Gallup this year? Sample definition?

Thanks

With your having a degree in political science, especially an advanced degree, I would expect you to know that it is the electoral college and not the popular vote that matters.

The error margin values cited are based not upon Gaussian distributions but upon Poisson distributions. One only has to look at the spectral density and the higher order statistics of the polls to see that bias is less than the noise. I would expect that a large collection would be a Gaussian distribution. I would like to try an unscented Kalman filter on them next time. That method has a better chance of extracting a signal obscured by noise. I’ve been too busy to write it this time. However, Bayesian methods used on the present site should be a very close approximation.

A bimodal distribution, if it existed, would only show that the output data contains some kind of filtering. All “likely voter” polls contain filtering.

Rasmussen polls seldom contain human polling but are predominantly robocalls which cannot call cell phones. Most of the population under 30 is excluded from his polls.

My Political Science prof told me that when response rates drop below 10%, the poll is unreliable. He provided citations, but I haven’t kept my notes from almost 50 years ago. One of our projects was to conduct a political poll. It was an election year and one of the students only had 10%. Almost all of the polls, and in particular robocall polls, get less than 10% response rate. Therefore, all of them currently are unreliable.

The ensemble of them such as Dr Sam Wang, Nate Silver or the current site are more reliable because some of the system and house errors will cancel. Anybody working in signal processing will tell you that (or Nate Silver’s book). Also, polls which repeatedly ask the same group of people, such as the RAND poll or the YouGov polls, have a degree of reliability and they still will have sampling error problems.

John C,

“A lot of liberals appear to be depending on Nate’s overly optimistic assessments to prevent themselves from “freaking out.””

This is absurd, as has already been pointed out, the only result of this type of conspiracy would be to destroy the credibility of the pollsters on election day.

And if you think Nate’s “overly optimistic”, you must believe this site, as well as Sam Wang’s and virtually every other pollster are flat out unhinged, as they give Obama even better odds.

The difference between these pollsters and you seems to be that they can separate their bias from the data, whereas you clearly cannot.

Sorry, but if Romney wins the popular vote by 3+ points, which certainly WILL happen, there is no chance in hell Romney will lose the EC.

If you think otherwise, you’re simply delusional.

Please keep in mind that most polls are vastly overestimating dem turnout.

There’s also the fact that Obama is now forced to campaign in blue strongholds like Minnesota(!)

How do you explain that?

The Obama campaign knows it’s in trouble. Funny that ya’ll can’t bring yourself to admit it. You’d be better off if you faced reality.

Uh, Dave — I hate to break it to you, but I live in Minnesota. Our Senator, the great Amy Klobuchar, is up for re-election. Check out her poll numbers — no one even knows the name of her opponent. You mean to tell me folks will go into the voting booth and pull the lever for Amy but not our President? If you believe that, you are still in some nether-delusional level of grief over Romney’s imminent loss. The reason MN is in any sort of play is the Western Wisconsin TV market, which is basically the Twin Cities. Romney foolishly believes, because he’s desperate, that WI is in play. My advice to you: don’t drink on Nov. 6; your hangover could be disastrous.

Have you considered Obama buys time in Minnesota to cover markets in Northern Iowa and Wisconsin? I grew up in Northern Iowa and our local television was out of Minnesota.

Campaigning in Minnesota is not different than Romney campaigning in North Carolina or Wisconsin.

Also RMoney isn’t winning the popular vote by 3 percent.

It strikes me that the only ones “freaking out” are those who don’t understand the central limit theorem (or, apparently, the electoral college). I’ll not name names, but I’d say we all know who they are.

Besides, even if all the “bad polls” favored Obama, how according to these guys would we even adjudicate amongst them? We can’t know a priori what the “true” LV screen is, so how can we know if the supposed bimodality in error terms favors one candidate over the other? Has the concept of aggregating data to reduce bias never occurred to these guys?

Per Gallup… 15 percent of registered voters have already cast ballots. Among those, Romney leads… 52 to 45. Oof…

http://www.gallup.com/poll/158420/registered-voters-already-cast-ballots.aspx

So when does this Votamatic thing kick in?

Ok, let’s say Rasmussen is biased, it’s still less pro Romney than Gallup. Are they rigging their results too? I know Votamatic doesn’t look at the popular vote, but it sure seems that the odds of a split result should be higher than the 5% 538 gives it. Even though I don’t like it, I have to concede that right now, Obama seems to have an edge on the state level.

I continue to not be sold by the polls that ask people the same thing again and again. Do even the most enthusiastic Obama supporters think he’ll win the national vote by the 7% Rand offers up? Maybe this is the future of polling but right now, it’s the least proven argument I’ve heard.

GS, I don’t believe for a second that Romney will win Minnesota, but the mere fact that Obama needs to extend resources there to keep it safe is telling.

They’re sending Clinton there this week FFS.

@Dave

“Sorry, but if Romney wins the popular vote by 3+ points, which certainly WILL happen, there is no chance in hell Romney will lose the EC.”

Is this a math theorem of yours? Any links you can share where that is shown to be the case? Did you prove that proposition while taking a rest from work?

Expend resources…. sorry, typing on my phone…

@Allan

It’s more believable than the numbers proffered on this site.

Dems seem to be pinning their hopes on the thought that Obama has the EC math in his favor, which, if anyone has been paying attention lately, is at best, lazy, and at worst, wrong.

This is liberal static analysis at its finest.

Dave,

Ha ha ha ha. Good ones! Early voting in IA, OH — Obama leads 2-1. That’s really all he needs. Wisconsin hasn’t voted GOP since 1984. It’s nonsense to even think of it as a swing state.

dave relies on his gut and intuitive ad hoc analysis to arrive at useless figures. I may as well ask my dog, then. It ain’t over ’til it’s over dave. Next Wednesday, we’ll see how it played out.

Dave, did you even read the Gallup article you posted? It says that the early voters as yet haven’t shifted the race either way, and that Romney edge is pretty close to where they figured he would be. I hardly see it as proof that he’s going to win by 3+ and take the EC vote. At most it’s confirming that half of his supporters who said they’d vote early (34%) did so. I’m not sure it’s the decisive story you want it to be.

Hey guys! I really enjoy your work Mr. Linzer. I find it interesting that Conservatives seem to be picking on Nate Silver when he has been giving Gov. Romney the best odds of all the pollsters. Mr. Linzer and Dr. Wang give Obama a 90%+ chance of winning the electoral vote, while Silver will most likely set Obama’s re-election probability at 75%.

For you gentlemen bemoaning the liberals’ “loose” hold of reality and their belief in the results of this site, 538’s, PEC and others, go to RealClearPolitics (which is NOT a fuzzy and warm liberal site) and check their poll pages.

I can almost hear them grinding their teeth in the background, but they are based on reality and their poll aggregations show (with Rasmussen duly included):

Romney with a 0.9 % (!) lead on National polls.

Obama 201 safe EV vs. Romney 190

Obama leading in OH (1.9%) +18

Obama Leading in WI (2.3%), PA (4.7%) , MI (4%), NV (2.4%) +10+20+16+6

total 271. Game set and Match

The recent moves of the campaign have the Romney forces probing new places to try and pick up a state (WI, PA, and possibly MN), and the Obama campaign matching them move for move. To me that looks like increasing desperation by the Romney campaign, recognizing that things aren’t going their way without some sort of change, and rolling the dice on longshots in increasingly blue states. The Obama campaign, taking nothing for granted, is defending against these moves, but other than that doesn’t see any need to change what they have been doing. Obama seems content with defending what his campaign must view as a winning position.

dave,

Romney put a few hundred thousand dollars in some ads in MN. Obama followed shortly after. This all happened after a poll showed something of a 3 point lead for Obama. The amount of money Romney would need to dump into MN to get it to swing his way would be substantially higher. More likely what happened was that the Romney campaign wanted to test Obama’s and see how they would react. Romney has been teasing of blitzing PA, but it’s pretty clear to them now that Obama would respond in turn if he was willing to respond to the even safer MN.

@Dave

When you say “It’s more believable than the numbers proffered on this site” I take it you mean the numbers in this site are nonsense.

Dave, the guy who runs this site is a conservative, like you!

I also have the hunch that the numbers here and in 538 are unreasonably favorable to Obama, but hunches are not a good way to assess reality :)

As I’ve said before. I think the Votamatic model is overly optimistic about Obama’s chances. The latest report from Rasmussen now shows Romney winning the electoral college at 279. As predicted, it looks like the undecided voters are turning against the incumbent.

John, I realize you really, really, really, really want Mitt to win, but why is it scientifically more sound to put all your stock in one poll (Rasmussen) that’s proven to be inaccurate and biased in the past over sites like these that take a variety of polls into account?

@ John Drew

You are pointing to the one aspect the model in this site and probably others are getting terribly wrong, namely the treatment of undecideds as equal opportunity breakers. This could be fixed very easily in Linzer’s model by a simple math change in the set up. As is, the predictions here, much that I like the underlying set up, are overwhelmed by the noise of last-minute breakers’ uncertainty.

There is reason to believe Intrade evaluates risk much better than historically-based models and that is the reason why Obama shares sell for about $0.60 rather than $0.8!

John C. Drew, you are far too reliant on Rasmussen. While they had a very good track record in 2008 at the national level, they weren’t particularly great at the State level. They had Ohio tied between McCain and Obama, for example, when Obama actually carried the state by about 4.5%. They had McCain as +1% in Florida when Obama carried it by about 2%. They were also off by 5% (in McCain’s favor) in Colorado. The mean/median of the aggregated polls was a much better predictor than a single poll.

@Allan. Perhaps things are different this time, but without further data one is supposed to hypothesize a 50-50 split on undecideds. It’s statistics. Until there’s data to prove otherwise, then the safest bet is to assume even odds in that segment of the population. That’s just statistical inference at work. But historically this even odds tends to be the way it plays out, at the electoral college level at least, where it counts. We’ll see who gets to crow soon.

@JCD: Rasmussen is overoptimistic about Romney, PPP is overoptimistic about Obama. Or are they? Who knows? Who cares? Aggregate polls are used to eliminate house effects that individual pollsters must have. Rasmussen always seems to be about +2% further into the red zone than the median, so I expect the 279 to be over confident. The EV may be kinda close, but maybe not after this storm. And Friday’s jobs numbers. Voodoo economics and market libertarianism failed. I almost wish Romney would win just to put the nail in the coffin lid. Except I want to retire with an account that’s worth something, and a Romney win would be awful for the country as a whole.

@Allan

Intrade, while it was accurate in the last election, was still fairly conservative in its probability estimates. Sam Wang has written about it at the Princeton Election Consortium. If you are truly interested in how people are betting on the election, it’s best to look at an aggregate.

http://www.oddschecker.com/specials/politics-and-election/us-presidential-election/winner

Currently, Obama leads in just about every betting site by a percentage greater than at Intrade. Most fall somewhere in between 73% and 77% odds for an Obama win. This is more in line with predictions made by FiveThirtyEight and Pollster, though below Votamatic and PEC.

“As a young, conservative faculty member at Williams College, I was startled by the extent to which the academic world was actively hostile to me simply because I disagreed with affirmative action and thought Bush would do a better job than Dukakis. ”

ooops.

@ Trim

Intrade makes it very difficult for investors to move quickly to exploit the differences with other sites (it takes DAYS for checks to clear, etc.) Still, it is a bit of a mystery why the other betting sites are so much higher. It could be that Intrade attracts more serious investor types, while the other sites attract betting types who are in mainly for the thrill. Baffling, something that should be analyzed.

@David, PhD. I would like to see Romney win because I believe the economy is going to tank regardless of who is elected and with Romney at the helm the whole world will be against the Republicans. I would expect his behavior would replicate Hoover (another successful businessman) and we would be in a depression that would make the 30s look like great times.

But, I don’t want to wish that on to the country. I think Obama will do a better job of handling the crisis and minimizing the impact.

Republicans have a huge stake in ‘delegitimizing’ Obama. Witness the birtherism, racism, etc. of the past 4 years. (Delegitimizing makes the McConnell/Cantor universal obstructionism seem less unpatriotic, as well.) When Romney (probably) loses, this anti-quant activity will provide another bit of support for denying Obama’s legitimate right to be President.

Furthermore, it is part of the right–wing mindset to deny such stuff as quantitative analysis, statistical modeling, etc., since the data and models are telling them something different from what they KNOW is true from Faux News and Rush. It’s exactly the same process as accusing climate scientists of cooking the books. When scientific methods tell you something you don’t like, just attack the messenger.

All zeese years later even az I look down from ze Heavens, you Americanz still amuze me zoo much! Monsieur le John Drew, PhD, I zink you are in for ze big disappointment on ze election day, zo as you Americans say, don’t have ze bovine when le Gouvernour Romney doezn’t even win le Florida.

@Allan

I think you are putting too much trust in Intrade. By comparison, it is the outlier of the group. It also has a much smaller pool than many other sites and has been subjected to partisans trying to change the dialog of the current state of the race (shown in 2008 and likely a couple weeks ago when Obama’s Intrade dropped over 10% because of a single investor). Betfair, by comparison, is not open to US credit card numbers and thus cannot be as easily swayed by US partisans (liberal or conservative)

@Jake As a political scientist, I see myself as a data-driven public intellectual along the lines of Charles Murray or James Q. Wilson. In my experience, it is my conservative Ph.D. friends who are doing the original, path-breaking work in social science. To suggest that the left is more friendly to statistics than the right strikes me as unhelpful and even a little peculiar. In general, it is young conservatives like Charles Johnson who are breaking new ground in reporting previously neglected facts about Barack and Michelle Obama and the key details of the up-coming election. See, http://www.theblaze.com/stories/a-detailed-look-at-obamas-radical-college-past-and-were-not-talking-about-barack/

I’m not sure how we can have a functional democracy even when the supposed intellectuals on the right cannot argue rationally or with anything that approaches reason.

Bad science is arriving at a conclusion then looking for data points to support that conclusion. That’s exactly what “John Drew, Ph.D” is doing as is most of what I read from on the right. Our system of government needs two functional parties, and right now the GOP has gone off the cliff.

The Blaze??? You are joking, right?

Apparently they’re giving out Ph.D.’s in political science to conspiracy theorists these days.

QFT: “Bad science is arriving at a conclusion then looking for data points to support that conclusion.”

I certainly believe that Obama is the favorite even though I don’t like it, but I’m not sure that I buy into the argument that Rasmussen has to be less accurate because other sites like this one use many polls. While the conspiracy theorists may turn out to be right about them, it seems to me that they’re the only outfit that seems to poll everywhere (every state, nationally every day). They have all that data, not all of which they share. They can certainly look at the other polls as well, plus I can’t help but believe that they have a mathematician or two on staff. So I’m not sure their predictions wouldn’t be as well based as anyone else’s. As for the conspiracy theorists, it does seem that if they’re really wrong, they have a lot more to lose than most other predictors.

Allan Marlow,

PEC attempted to include a factor for the perception that undecideds break for the challenger in his 2004 predictions. PEC’s computer simulation picked the EV exactly, and the subjective factor for breaking to undecideds, which called for a Kerry win, made it miss. After that occurred, they studied the data and determined that there is no data to support the hypothesis.

John C. Drew,

The positions you take here, which are purely subjective, are completely at odds with your description of being a data-driven intellectual. For the poll aggregating analysts to be “wrong,” their data would have to be faulty, because their math is mostly sound. And the polls when considered in aggregate have been a very reliable predictor of which candidate will win. Individual polls like Rasmussen, on the other hand, have a much more mixed history.

The Gallup numbers for early voting really are telling us nothing. Since the laws for early voting vary so much from state to state, we don’t know if only solid Romney states are voting early, or toss-up states, or solid Obama, or what the mix is. If the early voting states are a few toss-up (NC, OH, IA), some solid GOP (TX, AR, AK, WY, etc…) and only a couple Democrat (OR, WA), then that’s a very different number you’ll get out than the final vote numbers. We know that PA doesn’t have early voting, and they’re likely going Democrat, so those people can’t knock up the Obama numbers.

The real analysis at the end isn’t if Silver and others get Obama winning wrong, but how they get it wrong. Did they get every single state but Ohio or Pennsylvania right? Well then their model might be OK but state polls in those states were bad. Did they miss every state by 3%? Then that’s a totally different story. But just saying “Obama lost, all those guys were wrong” is too simple an analysis at the end, just like saying “Obama won, they were all right!” is incorrect if Obama were to lose PA but then win TX to somehow win.

I just noticed that Massachusetts is the state where president Obama has the strongest support. If Mitt was such a great governor, how is that possible?

By the way, John C. Drew, in general conservatives by definition are anti-original. All they do is criticize ideas that challenge their dogmas and often with delusional and sometimes dishonest arguments. This kind of play has been repeated over and over through history.

@John Drew

If what you say is correct, and I don’t have any reason to doubt it, political science must be a unique case of conservative representation in science. I say this because, as a very experienced scientist myself (an older guy with a rich trajectory in several fields), the vast majority of scientists are liberal, at least in the social sense of the word, and are also atheist or at least non-religious.

To most sophisticated people, today’s conservatism is so imbued with dogma that it becomes almost repugnant to the thinking brain. In all honesty, the true voices of today’s conservatives are the likes of Limbaugh, and nobody can be more foreign to a thinking individual than Limbaugh (or Beck, etc.)

I have watched in amazement how conservatives have tried to delegitimize Obama, in ways that challenge any measure of logic, common sense, or morality. At the same time, I have watched Obama stoically take in the punches, in a situation where most of his attackers would have conceded and simply left the scene.

I have a unique perspective on this because I am not American, and therefore I am not emotionally vested the way Americans are. I am a foreigner who has lived in this country (and a number of other countries) for many years, long enough to know it extremely well, and long enough to have seen it morph, in important respects, from a first-world nation into something dangerously close to an over-sized third-world country.

What is happening to Obama tells the world that this country has changed much less than most Americans would like to think since Marian Anderson was forbidden from singing in Constitution Hall. Racism isn’t pretty, too bad many of you don’t realize that.

Dr. Marlow:

I too am a Ph. D. (in Physics) but usually don’t need to flash it all over posts like someone we know… Like you I was born somewhere else and have been here for more than a quarter of a century.

I concur with your assessment.

The public discourse has degenerated to the point that, when deciding to apply for citizenship, I declined. The thin veneer of civility, especially since, gasp !, a Black Man won the presidency is really falling off.

A poll conducted recently shows that despicable racial attitudes are still lurking very near the surface.

The tremendous effort by a section of the public to de-legitimize a president at all costs has been hard to watch, and it dovetails with those attitudes.

Just a small sample: trying to find out the grades that someone received as an undergraduate, when the same someone was elected, by his peers, as the president of Harvard Law Review (a publication known for its soft and fuzzy standards…). It must have been a clear case of Affirmative Action…

I am not that happy with Mr. Obama’s performance so far: a bit indecisive and really following the “cookbook” of how a politician ought to talk and act. I think it does not fit him well, and wish he would step out of that “persona” and be what glimpses show through when he forgets to act “political”.

But the other option? Please, NO!!!

We shall see, and hope…

You should comment at PEC, Dr. Marco.

much less *ressentiment* and phd flashing.

;)

Hello Wheeler’s cat:

I lurk there most of the time (I am an original lurker since 2004) and I have a few posts .

In fact, I came here from there.

Funny how Dr. Wang’s Meta Margin and Dr. Linzer’s prediction seem to converge now…

But you know them scientists, they don’t get it, do they ;)

Can everyone please stop citing his/her degree in his/her name? It is extremely pretentious. The logic of your argument should speak for itself.

11:38 CST: The Princeton.edu site appears to be down. At least here in Illinois.

Hey Dr. Linzer, that’s a remarkably stable EV estimate. I read over your method. It will be interesting to see how well the recipe holds up. It’s interesting to see Nate’s model and Sam’s model both beginning to pull toward yours.

It is difficult to overestimate the power of habit, so it will be interesting to see how well the past predicts this election.

“…averaging all the polls together – as I do, and as does the Huffington Post and FiveThirtyEight – would simply contaminate the “good” polls with error from the “bad” ones. Both Trende and Cost contend the “bad” polls are those that favor Obama.”

Thank you for a well reasoned and dispassionate refutation of Trende and Cost’s contention. And I realize that such a refutation is necessary. But, there really should be a more peremptory mechanism for flagging articles as not worth a read when they contend that polls that favor Obama are bad without any justification.

Vijay wrote: “Can everyone please stop citing his/her degree in his/her name? It is extremely pretentious. The logic of your argument should speak for itself.”

Annoyingly pretentious, sure. Also perhaps a hint of insecurity that without the trappings the argument will have less force.

@Vijay, Partha Neogy

Citing one’s academic credentials is not a sign of insecurity. It is a form of reassurance that the person has knowledge of the subject at hand. Opinions are not all of the same quality even if today’s culture, especially in conservative circles, says so.

If a truck driver calls global warming a hoax, however nice a person he might be, his statement doesn’t carry the same weight as if Prof. Hawkins called Global Warming a hoax. This is something that is totally lost among working class conservatives, and to some extent even among liberals.

If Drew Linzer didn’t have a PhD from a reputable institution in a field strongly influenced by statistics, I personally wouldn’t bother to read his opinion and much less his paper.

Limbaugh’s opinion that the polls are skewed is worthless not because Limbaugh is a conservative, but because he doesn’t have a clue about math.

I agree that trumpeting around one’s degrees is not in the best of taste, but it is even in worse taste for people with no qualifications to expect their opinions to be equally valued.

In other words, the idea that one’s ignorance is as good as someone else’s knowledge (also known as anti-intellectualism), is the worst and most damaging form of arrogance.

“Partha Neogy

October 31, 2012 at 10:46 am

Vijay wrote: “Can everyone please stop citing his/her degree in his/her name? It is extremely pretentious. The logic of your argument should speak for itself.”

Annoyingly pretentious, sure. Also perhaps a hint of insecurity that without the trappings the argument will have less force.”

Well, my motivation was to counter JCD’s attempt to use “appeal to authority” to sway opinions. Rhetoricians do such things. I feel my logic did speak for itself, but since JCD seems to think the fact that he has a Ph.D. (in political science!) gives him some special insight that the rest of us don’t have, it was called for. I bet he doesn’t understand anything about Linzer’s paper.

Anyone know what is up with the Princeton Election Consortium website? I have not been able to access it for most of the day. Thanks to 538, PEC and Votamatic I have a clearer understanding of what is going on. Thanks Drew for your efforts.

There are many instances of people with advanced degrees whose entire careers are nothing but sheer and pure nonsense (Chopra is my favorite example, listening to the guy talk about quantum physics is funnier than GW Bush giving a dissertation on world history.)

If there is any virtue to Limbaugh and Palin, it is that they take pride in their ignorance, rather than disguising it – thus their popularity, in my opinion.

“Allan Marlow

October 31, 2012 at 12:48 pm

There are many instances of people with advanced degrees whose entire careers are nothing but sheer and pure nonsense (Chopra is my favorite example, listening to the guy talk about quantum physics is funnier than GW Bush giving a dissertation on world history.)”

:) That’s true. That’s quite an act of spiritual kindness, making W sound learned. Is our mystics learnéd?

BTW here is a link to JCD’s publication list on google scholar, his thesis and an article 15 years later on management ethics. Plus one citation in someone else’s paper. Not that publications should necessarily be the ultimate measure of a human’s worth, but still …

John C. Drew is wagering everything on next Tuesday. I wish him luck.

I hope my credentials are in order to be admitted to this most distinguished club. Merely popping by to note that even the malcontents at Real Clear Politics are now calling the national race a tie. Cheerio, lads!

@ David

Understood. My remarks about annoying pomposity were directed at the commenter who set the trend. Sarcastic responses to that trend are clearly justified.

I think Professor Drew’s comments are misdirected. Votamatic, PEC, and 538 aren’t mainstream media, even though 538 is loosely affiliated with the Times. These sites are basically data aggregators, and the data they are crunching are political polls. They aren’t exhibiting bias, they are simply presenting numerical results. Drew may have a point about media bias, although it certainly goes both ways. For every outlet that reports Obama’s alleged gains, there are Fox, Limbaugh, etc to say why they are wrong. That’s why we come to sites like this. If Drew wants to complain about MSM, fine. But that isn’t what’s happening here. That’s why we visit these sites.

Today, Intrade saw a rally that moved Obama prices close to 70% within an hour. Fascinating to watch.

If one asks the question what is the probability that polls turn out to be non-trustworthy, then that probability is approximately 30% – the same as the risk of betting for Obama.

For those who believe there is something wrong with the predictions and Romney will win, the time to invest is now – Ireland is a wonderful place to collect the proceeds.

Betfair has Obama’s chance at 73.53% now. And the 95% bars continue to tighten in Drew’s model.

A PSA for the votamatic commentariat:

Dr. Wang has a harbor of refuge at dKos for those going through PEC withdrawal.

http://www.dailykos.com/story/2012/10/31/1153374/-Princeton-Election-Consortium-a-technical-announcement

Here’s an article that articulates my take on the bimodal character of the current polling results. http://www.breitbart.com/Big-Government/2012/11/01/Eight-reasons-Pro-Obama-Polls-Are-Wrong #tcot

@Alexis-Charles-Henri Clérel de Tocqueville

Monsieur, mon cher ami et frère gaulois, un grand merci pour le commentaire suprêmement brillant.

John Drew has now linked to both the Blaze and Breitbart.com. If this is what he thinks about when describing “conservative pathbreaking research,” then I desperately hope that he is not teaching students.

John, that’s not very articulate. For example, reason number 3 says that the “pro-Obama” polls are wrong because RCP (one of those polls btw) says Romney is “more favorable”. Just weird and illogical. Another ‘reason’ given is that Romney is doing well in early voting. But is there a correlation between the final tally and the early voting tally? I don’t think so. But if you think so, give references, please, that calculate the correlation.

I think the guy who wrote that is going to be choking on a chicken bone come Wednesday morning.

“A necessary but not sufficient condition for a symmetrical distribution to be bimodal is that the kurtosis be less than three.” Kurtosis should be an easy thing for Drew to compute with his data; if no set has kurtosis less than three, well then we’re done. If there are, we can go farther.

Some tests were discovered by Frankland and Zumbo. Begin here: http://educ.ubc.ca/faculty/zumbo/jmasm_paper.pdf and then here: http://educ.ubc.ca/faculty/zumbo/papers/Frankland_Zumbo_2009.pdf
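
The kurtosis screen suggested above can be sketched in a few lines. This is only an illustration, taking the quoted condition at face value: it computes Pearson's (non-excess) kurtosis, for which a normal distribution scores 3, and flags a sample as "possibly bimodal" when the value is below 3. The function names and the two toy samples are made up for the example and are not real poll data; with small samples the estimate is also quite noisy.

```python
from statistics import fmean


def kurtosis(values):
    """Return Pearson's (non-excess) kurtosis; a normal distribution scores 3."""
    data = [float(v) for v in values]
    if len(data) < 2:
        raise ValueError("need at least two observations")
    mean = fmean(data)
    second = fmean((x - mean) ** 2 for x in data)  # population variance
    fourth = fmean((x - mean) ** 4 for x in data)  # fourth central moment
    if second == 0:
        raise ValueError("all observations are identical")
    return fourth / second ** 2


def passes_bimodality_screen(values):
    """True when kurtosis < 3, the quoted necessary (not sufficient) condition."""
    return kurtosis(values) < 3.0


# Made-up numbers for illustration only, not real poll data.
two_clusters = [-1, -1, -1, 1, 1, 1]
one_peak = [0, 0, 0, 0, 0, 0, 0, 0, -3, 3]
print(kurtosis(two_clusters), passes_bimodality_screen(two_clusters))
print(kurtosis(one_peak), passes_bimodality_screen(one_peak))
```

Passing the screen would only mean bimodality has not been ruled out; a formal test would still be needed to go farther.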

Breitbart.com ??? Seriously? No offense, but that’s like reading the National Enquirer or Globe of politics. They are so far to the right, they are off the charts. More balanced websites completely refute the bimodal claim. I have degrees too, but I defer to non-partisan websites run by intelligent people with an expertise in statistics. Reading Breitbart is like reading Drudge or Daily Caller– they see what they want to see and are often wrong. I’m sure there are examples on the far left as well.

The argument that the polls are wrong, can’t be made right now. It can only be made after election day. But one would say the polls are wrong because … in the past they have been wrong.

What’s his name, the nameless one, has published actual data showing how biased and wrong they were both for national polls and state polls. The real question is, “How wrong will they be this year?” and “What’s the probability that their wrongness is more than the size of the predicted margin?”

Now the breitbart article says, “Polls Show Republicans Will Turn Out In Record Numbers.” Okay. So the polls that are wrong also predict a record Republican turnout? Or *that* poll is right, and all the rest are wrong? John Drew, your Ph.D is starting to lose a little of that old luster.

Most of the poll aggregators, including Drew, have done some pretty good work to scrutinize the polls for bias. Accuracy is different than bias. It’s quite possible that some of the state poll averages that are converging on Obama leads > 2.0 pts on election day, might prove wrong, but that is not consistent with the historical trend. The historical trend is that a poll averaged lead of > 2 pts on election day predicts the state winner with good accuracy.

BTW, poll aggregators have attempted to reconstruct national numbers from state numbers. These have also shown Obama leads, even when 2004 turnout models are used. You can cook up a new turnout model to argue that the state polls are wrong, but you probably shouldn’t cook up a turnout model that is more improbable than the poll averages. Sure, if every Republican in Ohio votes, and no Democrat in Ohio votes then Romney will win. And you can’t just wave your arms and say, “Whoa, a whole buncha new republicans are voting in Ohio this year.” You should go with a turnout model that’s within historical norms and see how that plays.
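
Reconstructing a national number from state numbers, as described above, amounts to a turnout-weighted average. Here is a minimal sketch under that assumption; the function name, the two placeholder states and every number in it are hypothetical, chosen only to show the arithmetic, and the aggregators' actual procedures may differ.

```python
def national_share(state_shares, state_turnout):
    """Turnout-weighted average of a candidate's state-level vote shares."""
    total_votes = sum(state_turnout.values())
    if total_votes <= 0:
        raise ValueError("turnout must be positive")
    weighted = sum(state_shares[s] * state_turnout[s] for s in state_turnout)
    return weighted / total_votes


# Hypothetical inputs: two placeholder states, not real polling or turnout data.
shares = {"State A": 0.52, "State B": 0.47}
turnout = {"State A": 3_000_000, "State B": 1_000_000}
print(round(national_share(shares, turnout), 4))  # 0.5075
```

Swapping in a different turnout dictionary (say, one patterned on an earlier election) changes only the weights, which is why the choice of turnout model matters so much to the result.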

@Marie-Joseph Paul Yves Roch Gilbert du Motier, marquis de La Fayette

C’est un honneur d’être à votre service.

Ad hominem attacks on Breitbart are actually somewhat persuasive, but even looking at the article and addressing its merits, there is no substance, but instead, a series of subjective perceptions, that if true, would conflict with the polls. With Prof. Drew, I suspect he is a troll, but I can’t be sure based on some of the comments I’ve seen from many republicans, whom science, data, and evidence do not seem able to reach.

Having said that, if there is a systematic error in the polls, then it is probably in the likely voter screens. But the analysis on Breitbart’s site can be boiled down to this: the results of the polls disagree with my subjective beliefs so I reject them. If there is a problem, it’s because the well-established screens used by many pollsters no longer work. I’d be curious to see an actual analysis of the questions asked and an explanation why those questions no longer work after having been accurate in the past.

The ONE aspect that all sites concerned with Nov 6 predictions are leaving out of serious analysis is the black swan event of a Romney landslide. They all agree the probability of this event is relatively low (30% or less), but none (to my understanding) attempt to explain it at the model level. A simple regression-based structural model points to a Romney landslide (the Univ of Co report), but doesn’t explain it.

Explaining an unlikely event at the model level means you work with your parameters to see if anything you already have in your model can capture such an event, or to find out whether you need to add something else.

Models that allow probabilities to “drift” (as in this site or in PEC) don’t explain black swan events. To explain a black swan effect, modelers must be fancier in the way they treat their probabilities, and must work in concert with the betting markets (the only markets on outcome probabilities).

I am fascinated that so little of this goes on in the models open to the public. This event, if it happens, will hurt the reputation of stat and math modeling in Political Science, just like it hurt the valuation models in finance when they failed to characterize the rare and devastating event of 2008.

Black Swan events. Or, given enough time, a monkey typing on the typewriter will write the works of William Shakespeare. But that’s an incredible amount of typing that goes on. It basically says that outliers occur, stuff happens (what kind of stuff?). Calling it a “black swan” was a good marketing ploy. Sure there’s the outside chance that Romney wins in a landslide, kind of like how Truman beat the odds. But Truman was the incumbent. I don’t know why Republicans refuse to remember that episode in history, other than it was one of their own who won so they don’t remember that the flipped coin is slightly partial to the incumbent.

I don’t see any black swan swooping in on this one. There are no signs.

The 2008 bursting of the housing bubble was foreseeable back in 2002, it was no Black Swan event, not really. It was entirely predictable, and Greenspan just ignored the data. The fundamentals couldn’t carry the price so everybody was shadow banking on values that weren’t real. Maybe something similar is happening here, but then there would be similar warning signs. The fundamentals (state polls) look good, and history suggests they’re fairly accurate as the election comes to an end.

I don’t believe that the 30% chance of a Romney victory is a 30% chance of a Romney landslide. And I’m not sure that would qualify as a Black Swan event, at least not in the respect that Taleb uses the term. Even a 1-2% chance, if predicted, would not qualify as something non-calculable or unpredictable. A black swan event would be Romney winning California.

Further, I think that the chance of a Romney victory is explained at the model level. For example, this site calculates a 95% confidence interval to pick the winner in each state. That includes information about the likelihood of a result falling outside of the interval. In other words, the same method he uses to calculate the probability of an Obama victory calculates the probability of a Romney victory. There is zero probability that someone else will win.
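
To make the point above concrete, here is a minimal sketch of how one forecast distribution yields both probabilities at once. It assumes, purely for illustration, that a state's two-party Obama share is normally distributed and summarized by a mean and a 95% interval; this is not claimed to be the site's actual method, and the function name and the numbers are hypothetical.

```python
from statistics import NormalDist

Z_95 = 1.959964  # half-width of a 95% normal interval, in standard deviations


def win_probabilities(mean_share, lower95, upper95):
    """Return (P(Obama wins), P(Romney wins)) for a two-party vote share."""
    sd = (upper95 - lower95) / (2 * Z_95)
    if sd <= 0:
        raise ValueError("interval must have positive width")
    # Obama wins when his two-party share exceeds 50%.
    p_obama = 1 - NormalDist(mean_share, sd).cdf(0.5)
    return p_obama, 1 - p_obama


# Hypothetical forecast: 52% with a 95% interval of 50% to 54%.
p_obama, p_romney = win_probabilities(0.52, 0.50, 0.54)
print(round(p_obama, 3), round(p_romney, 3))  # 0.975 0.025
```

In a two-party setup the two numbers always sum to one, so any model that reports one of them has already accounted for the other.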

True, a 95% interval still allows, on average, 1 out of 20 repeats to fall outside that range, perhaps above or below. In other words, an outlier can occur at any time, but, on average, not frequently, and even then you can have long runs of outliers without violating the law of large numbers. No model can eliminate error, but one can minimize the chance of it, at the cost of efficiency and economy.

I can’t believe you guys are feeding this Dr. Drew troll so well.

All of your well reasoned arguments and incontrovertible evidence will only backfire, entrenching him more strongly in his beliefs:

http://www.boston.com/bostonglobe/ideas/articles/2010/07/11/how_facts_backfire

He’ll only reconsider his stance on information from a figure he respects, a shift in the sentiments of this self identified peer group, etc. If you really do care about convincing him (idk why you would care about this fool), you’d be a lot better off ingratiating yourselves to him, learning who he respects or other tried and true methods of manipulation. ….assuming he’s even serious.

Markets don’t agree with the 1 out of 20 prob of Romney winning, that is why Obama contracts are priced the way they are. That is what has been left unexplained by the models. The market is clever enough to tell the miscalculation is there, but not clever enough to say where it comes from (poor polling, etc.) That is where the modeler must step in and set up his model such that it can accommodate the unexpected event.

Allan Marlow,

I’m not sure we should expect models designed to predict elections based upon polls to also predict the results of betting markets. And I’m not sure that we should expect a divergence between the two to be an indictment of either. For one thing, for the divergence to present an issue with the statistical models, the betting markets would have to be more efficient than the polls. They may be, but historical indications are that they coincide with the “odds” predicted by poll aggregation along with a bias toward the trailing party.

Interestingly, there are people who suggest that asking people who they think will win an election is a better predictor of the actual outcome than asking people who they intend to vote for.

The 1 in 20 refers to the error margins shown in the graph at the top, at least that’s what I was talking about. Which often leaves room for the unexpected event. In this case, the chance of a Romney win, according to PEC is somewhere between 1% and 4%. So, the unexpected event is accommodated. It might occur, it’s just very unlikely. If this were a game like craps where you can repeat the game as long as your bank account holds out, then don’t bet Romney. But this is a one-off game. So interpreting a 4% chance of winning as NO chance is just silly. I think you’re neglecting two things: firstly, the fact that the models do include the unexpected events, otherwise they would say the chance of Romney winning is 0%; and, secondly, that these are probabilities, which have nothing to do with certainty, just what should happen when the game is played repeatedly ad infinitum. Even with a coin that has only a 4% chance of landing heads, tossing it 4 times gives you about a 15% chance of seeing at least one head (1 − 0.96^4 ≈ 0.15). It’s unlikely, but it’s not a sure bet that only tails will be tossed. So the 15% accounts for the unexpected event that a head turns up.
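
The arithmetic behind the 15% figure is worth spelling out, since two different events are easy to confuse: with a 4% chance per toss, seeing at least one head in four independent tosses has a probability of about 15%, whereas four heads in a row is far rarer. A small sketch (the function names are just for this example):

```python
def prob_at_least_once(p, n):
    """Chance that an event with per-trial probability p occurs at least once in n independent trials."""
    return 1 - (1 - p) ** n


def prob_every_time(p, n):
    """Chance that the same event occurs on all n independent trials."""
    return p ** n


print(round(prob_at_least_once(0.04, 4), 4))  # 0.1507
print(prob_every_time(0.04, 4))               # roughly 2.56e-06
```

Neither number says anything about a single one-off event beyond the original 4%; repetition is what moves the odds.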

In physics, a probability of zero doesn’t mean “won’t happen” it just means “very unlikely to happen.” But no one can predict a specific outcome of a specific future event (sorry, psychics). Things that shouldn’t happen do, but that’s because, let’s face it, we don’t know as much as we like to believe we do. Even the little we do know people misunderstand or just plain discard.

As far as markets go, they are not necessarily trustworthy. The market got the housing bubble very very wrong. And the internet stock bubble before that. Minsky says these events can be expected, due to the structure of the system. You just have to sit back and watch them develop. Greed can, does, and did, overrun reason.

Commenter reminded me of one thing more about the markets: they carry transaction costs, which the trader factors into the cost of the position, creating a bias towards the center if most of the positions held are small. Voting surveys do not have this issue.

This is what we have: Models that predict Obama winning with probability > 95%, and markets that predict Romney winning with prob 35%.

One of these is wrong.

The guys doing the models defend what they do, and blame the markets for being inefficient, rather than trying to understand where the difference comes from.

Here is the answer, if you care to hear about it. Your models are incomplete. They are not necessarily wrong, but they are missing TWO important pieces. If you had the humility to learn how markets work, you might just uncover what those pieces are and get it right.

Once the election happens, assuming Romney wins, there won’t be a way to tell if he won because of the 1/20 of your model, or because of the 0.35 of Intrade. You will say it was because of the 1/20 of your model – that will be dogmatic conservative thinking at work.

Understanding how the markets work requires a background in finance, not just statistical recipes and political science.

(BTW: Transactions costs explain about 1% in probability differences.)

“You will say it was because of the 1/20 of your model – that will be dogmatic conservative thinking at work.”

No, that is proper statistical inference at work. If you’re reading the results from the poll data, that is what you have to do. It’s called being careful, not conservative.

Your faith in the markets is touching, but again, history shows markets are geared to tend toward being irrational and inefficient, prone to speculation (Minsky), and sometimes dismissive of the fundamentals to its own regret. Eventually the market corrects, because it has to when its predictions are based on mind vapors and not solid data.

There are plenty of examples that show that large players can tilt markets (LTCM and “the Whale”). What if that is happening here? Are there any large stakeholders?

Try this method: Look at the aggregate polling graphs for each swing state and look to see if Romney EVER led at any time during the campaign. If he took the lead, even briefly by a fraction of a point, give him the state. I found that if Obama only keeps the states where he never gave up the aggregate lead (like Ohio, for instance), Obama wins the EC. How favorable do you have to make it for Romney for him to be projected the winner? How likely is it he will win states where he has never led? Just askin’.

I’m late in noticing this, but ChrisH nailed it with this comment and I wanted to highlight it.