Welcome to Votamatic for the 2016 presidential election campaign.

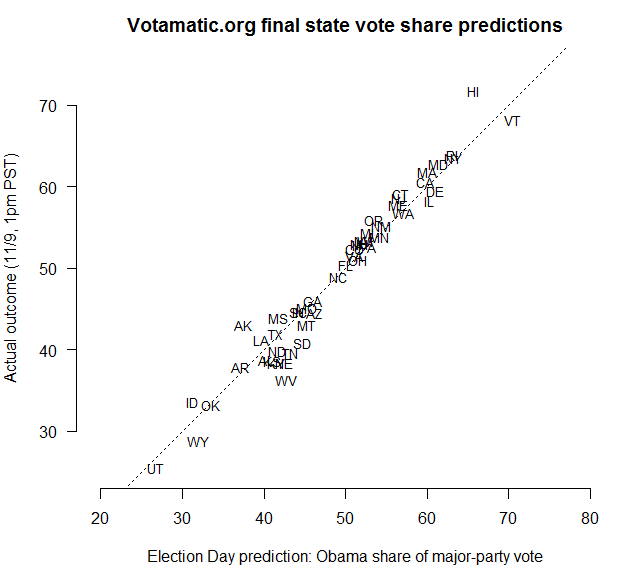

For those new to the site, I originally launched Votamatic in 2012 to track and forecast the presidential election between Barack Obama and Mitt Romney, based on some academic research I had been doing at the time. My early prediction of a 332-206 Obama win, using a combination of historical data, state-level public opinion polls, and a Bayesian statistical model, turned out to be exactly correct. All of the data and results from 2012 have been archived and can be reached from the top navigation bar.

The 2016 version of Votamatic is going to be fairly scaled back compared to 2012. I’ll still have poll tracking charts and the occasional blog post, but my election forecasts will be built into a brand new site at Daily Kos Elections that I’ve been helping to create. In 2014, I worked with the Daily Kos Elections team to forecast the midterm Senate and gubernatorial elections, with continued success. This year, we’re expanding the collaboration.

Over the next few weeks, we’ll be rolling out a bunch of new features, so stay tuned. Starting with presidential forecasts, we’ll soon add forecasts of every Senate and gubernatorial race in the nation (including the chances that the Democrats will retake the Senate), and sophisticated poll tracking charts and trendlines, all built on top of a custom polling database. The site will also feature Daily Kos Elections’ regular campaign reporting and analysis, as well as candidate endorsements and opportunities for getting involved. I hope you’ll find the site interesting, immersive, and accurate — and worth returning to as the campaign evolves.

(Sneak preview: Other election forecasters are giving Hillary Clinton around an 80-85% chance of winning. My interpretation of the polling data and other historical factors makes me a little less confident in a Clinton victory, though not by much; I’ll have more to say on this soon. Either way, the election is still far from a done deal. Flip a coin twice: if you get two heads, that’s President Trump.)

I will update the trendlines on this site every day or two, as new polls come in. Every state that has at least one poll will get a trendline. To see the polling data and trends together, go to the Poll Tracker page. For a zoomed-in view of each state’s trendline, check out the State Trend Detail pages.

The statistical model that I use to produce these trendlines is designed to reveal, as clearly as possible, the underlying voter preferences in each state during the campaign. In the Florida poll tracker, for example, Clinton (blue) led until mid-July, when she was overtaken by Trump (red). After the Democratic National Convention, however, Clinton’s numbers rebounded to move her back into a slight lead.

States with more polls will have more accurate trendline estimates. But my model produces a complete trendline for each candidate in any state that has at least one poll. To do this, it looks for common patterns in public opinion across multiple states over time, and uses those to infer a national trend. (This works because changes in voter preferences are largely, though certainly not entirely, a response to national-level campaign effects.) The model then applies that trend back to each state, adjusting for each state’s own polling data. States in which no polls have been conducted are displayed as empty plots, awaiting more data.
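To make the borrowing-across-states idea concrete, here is a deliberately simplified sketch. It is not the Bayesian model behind the trendlines. It assumes a toy additive structure in which each poll equals a state level plus a shared day effect, and fits it by alternating averages. The function name `fit_additive_trends`, the data layout, and the example numbers are all hypothetical.

```python
from collections import defaultdict


def fit_additive_trends(polls, n_iter=50):
    """Fit share = state_level + shared_day_effect by alternating averages.

    polls: list of (state, day, clinton_two_party_share) tuples.
    Returns (level, effect): dicts keyed by state and by day.
    Toy illustration only; the real model is Bayesian and more involved.
    """
    states = {s for s, _, _ in polls}
    days = {d for _, d, _ in polls}
    level = {s: 0.0 for s in states}
    effect = {d: 0.0 for d in days}

    for _ in range(n_iter):
        # Update each state's level, holding the shared day effects fixed.
        sums, counts = defaultdict(float), defaultdict(int)
        for s, d, y in polls:
            sums[s] += y - effect[d]
            counts[s] += 1
        level = {s: sums[s] / counts[s] for s in states}

        # Update the shared day effects, holding the state levels fixed.
        sums, counts = defaultdict(float), defaultdict(int)
        for s, d, y in polls:
            sums[d] += y - level[s]
            counts[d] += 1
        effect = {d: sums[d] / counts[d] for d in days}

        # Center the day effects at zero and move the shift into the
        # state levels, so the two pieces are separately identified.
        shift = sum(effect.values()) / len(effect)
        effect = {d: e - shift for d, e in effect.items()}
        level = {s: v + shift for s, v in level.items()}

    return level, effect


# Hypothetical data: state "A" is polled on three days, state "B" only once.
polls = [("A", 0, 50.0), ("A", 1, 52.0), ("A", 2, 51.0), ("B", 1, 46.0)]
level, effect = fit_additive_trends(polls)

# State B still gets a value for every polled day: its level plus the shared trend.
trend_b = {day: level["B"] + effect[day] for day in sorted(effect)}
print(trend_b)
```

In this made-up example, state B's single poll pins down its level, and the movement seen in state A supplies the shape of its trendline, which comes out near 44, 46, and 45 for the three days. Days on which no state was polled get no estimate in this sketch, and it has no notion of uncertainty.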

The trendlines that you will see here track Clinton and Trump in a head-to-head matchup only, excluding third-party candidates and voters who say they are undecided. This has the benefit of removing idiosyncrasies from the polling data around question wording, survey methodology, whether a pollster “pushes” respondents who are leaning towards either candidate into making a decision, and so forth. Visually, this explains why the trendlines for Clinton and Trump are mirror images of each other: the Clinton and Trump percentages have been rescaled to sum to 100%. On the other hand, this sacrifices a lot of potentially interesting information about each race. The trendlines we’ll have at Daily Kos Elections will include other candidates and undecideds.
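The rescaling itself is simple arithmetic. A minimal sketch, with a hypothetical function name and made-up poll numbers:

```python
def two_party_shares(clinton_pct, trump_pct):
    """Rescale raw poll percentages so the two major candidates sum to 100."""
    total = clinton_pct + trump_pct
    if total <= 0:
        raise ValueError("poll must report support for at least one candidate")
    clinton_share = 100.0 * clinton_pct / total
    # Trump's share is the remainder, which is why the lines mirror each other.
    return clinton_share, 100.0 - clinton_share


# Hypothetical poll: Clinton 44, Trump 40, with 16 for other candidates or undecided.
clinton, trump = two_party_shares(44.0, 40.0)
print(round(clinton, 1), round(trump, 1))
```

Here a 44-40 poll becomes roughly 52.4-47.6: the four-point raw margin turns into a slightly larger two-party margin because the 16% of other and undecided respondents has been dropped.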

Finally, I account for two other features of each poll. Polls with larger sample sizes are given more weight in fitting the trendlines than polls with smaller sample sizes. And if a poll was conducted by a partisan polling firm, the model subtracts 1.5 percentage points from the reported vote share of the candidate from that firm’s party. So, for example, if a Democratic pollster reports a race tied at 50%-50%, the model treats the poll as showing a three-point Trump lead, 51.5%-48.5%. Those are the only adjustments I make to the raw polling data; I assume that all other survey errors will cancel each other out as noise.
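Both adjustments can be sketched in a few lines. The 1.5-point offset comes from the description above; everything else is an assumption made for illustration, including the function names, the `"D"`/`"R"` labels, and the use of a plain sample-size-weighted average as a stand-in for how the model weights polls internally.

```python
PARTISAN_OFFSET = 1.5  # percentage points, as described in the post


def adjust_for_partisanship(clinton_share, pollster_party=None):
    """Shift a two-party share (0-100) away from a partisan pollster's candidate.

    pollster_party: "D", "R", or None for a nonpartisan firm (hypothetical labels).
    Returns (clinton_share, trump_share), which still sum to 100.
    """
    if pollster_party == "D":
        clinton_share -= PARTISAN_OFFSET  # take 1.5 points from Clinton
    elif pollster_party == "R":
        clinton_share += PARTISAN_OFFSET  # take 1.5 points from Trump
    return clinton_share, 100.0 - clinton_share


def sample_size_weighted_mean(polls):
    """Average Clinton shares, weighting each poll by its sample size.

    polls: list of (clinton_share, sample_size) pairs.
    A simple stand-in for weighting; the actual model fits a trendline.
    """
    total_n = sum(n for _, n in polls)
    return sum(share * n for share, n in polls) / total_n


# The example from the text: a Democratic pollster reports a 50-50 tie.
print(adjust_for_partisanship(50.0, "D"))

# Two hypothetical polls: the 1,000-person poll counts twice as much as the 500-person one.
print(sample_size_weighted_mean([(52.0, 1000), (48.0, 500)]))
```

The first call reproduces the 48.5-51.5 split from the example above. The second shows the larger poll pulling the average toward its own result, about 50.7 rather than the unweighted 50.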

See you soon, over at Daily Kos Elections!